Data types in machine learning – what data can ML use

The importance of machine learning

According to a recent report, the machine learning market was valued at USD 19.20 billion in 2022 and is expected to grow to USD 225.91 billion by 2030, a Compound Annual Growth Rate (CAGR) of 36.2% over the forecast period. This rapid expansion highlights the role ML plays in shaping industries by supporting intelligent decision-making and improving user experiences.

Data forms the foundation of ML, serving as the material that algorithms utilize for learning and making predictions. The efficacy of ML models is directly linked to the volume, relevance, and quality of the data they are based on.

In machine learning, data can come in different forms – e.g., structured data (like tables in a database), unstructured data (such as text and images), and semi-structured data (like JSON files). The variety of data types machine learning systems can handle shows how adaptable they are to different domains and challenges.

Understanding the basics of data in ML

Labeled and unlabeled data

Data is generally divided into two categories in ML: labeled and unlabeled.

Labeled data is given tags (or labels) that indicate the desired output for the ML model to predict. This type of data is the basis of supervised learning, where the model is taught to make predictions based on input information. For instance, in a dataset aimed at identifying spam emails, messages would be marked as either “spam” or “not spam” so that models can later be trained to sort emails correctly.

Unlabeled data, as you can tell from its name, lacks associated labels. It’s used in unsupervised learning scenarios where the model seeks to find patterns and structures within the data without instructions on what to predict. Unlabeled data is more abundant and simpler to gather compared to labeled data but poses challenges when it comes to deriving meaningful insights without predefined guidance.
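To make the distinction concrete, here is a minimal Python sketch. The example messages and the helper name `split_inputs_and_labels` are made up for illustration; the only point is that labeled examples carry the expected output alongside the input, while unlabeled examples do not.

```python
# Labeled data: every input is paired with the output the model should predict.
labeled_emails = [
    ("Win a free prize now", "spam"),
    ("Meeting moved to 3 pm", "not spam"),
]

# Unlabeled data: inputs only, with no indication of the desired output.
unlabeled_emails = ["Your invoice is attached", "Limited offer, click here"]


def split_inputs_and_labels(examples):
    """Separate inputs from labels, the usual shape for supervised training."""
    inputs = [text for text, _ in examples]
    labels = [label for _, label in examples]
    return inputs, labels


inputs, labels = split_inputs_and_labels(labeled_emails)
```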

Having a good grasp of the kinds of data machine learning can use, and knowing how to handle and organize that data, is crucial for creating ML systems. As machine learning becomes more integrated across various fields, the importance of data becomes more and more evident.

Splitting data in ML

An essential practice in machine learning is splitting your data into different subsets: training, validation, and testing sets. This process helps in assessing how well the model performs.

- The training set is used to teach the model. This is the data, both inputs and outputs, that the model actually sees and learns from.

- The validation set is used during the training process. The model's hyperparameters (settings fixed before training rather than learned from the data) are tuned against it, and the version of the model that performs best on the validation set is selected.

- The testing set is used once the model has finished training to conduct an unbiased evaluation. The model predicts values for the test inputs without seeing the corresponding outputs, and ML engineers then compare the predictions with the actual outputs to assess how much the model has learned.

A common split ratio is 70% for training, 15% for validation, and 15% for testing. However, it can vary depending on the size of the dataset and project requirements. By dividing data this way, machine learning models become more reliable and effective in real-life scenarios.

It also helps detect and reduce overfitting, which happens when a model fits the “noise” and unimportant fluctuations in the training data too closely and then performs poorly on new data.
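The 70/15/15 split described above can be sketched in a few lines of plain Python. The function name `split_dataset`, its parameters, and the fixed seed are assumptions made for this example; in practice many teams use a library helper instead.

```python
import random


def split_dataset(examples, train_frac=0.70, val_frac=0.15, seed=42):
    """Shuffle the examples, then cut them into train, validation, and test lists."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable

    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)

    train = shuffled[:n_train]
    validation = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains goes to the test set
    return train, validation, test


examples = list(range(100))  # stand-in for 100 real examples
train, validation, test = split_dataset(examples)
```

Shuffling before cutting matters when the data is stored in some meaningful order. For time-ordered data a chronological split is usually preferred, which this sketch does not cover.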

Main data types in ML

The data used in ML can be categorized into quantitative and qualitative.

Quantitative data types: the backbone of precise analysis

In ML, quantitative data types serve as building blocks for algorithms to decipher patterns, detect trends, and uncover connections. They provide a measurable and objective basis for creating ML models and are used for tasks that require precision, such as forecasting, regression analysis, and optimization problems.

Numerical data

Numerical data lies at the heart of quantitative data. Essentially, it refers to data represented by numbers, and it is further classified into discrete and continuous data.

Discrete data

This sub-type of numerical data represents countable items, such as the number of sessions or clicks a user makes. For example, discrete data can be used to track user interactions in an app and reveal patterns that inform user retention strategies. Imagine an application that studies millions of user engagements to determine the best time to send push notifications to boost interaction: the counts of those engagements are discrete data in action.

Continuous data

Continuous data indicates measurements that can take any value within a range, such as temperatures or distances. This sub-type of numerical data can be used to monitor temperature fluctuations throughout the year to anticipate energy consumption trends.

Where is numerical data used?

- Real Estate: numerical data describes property features, such as square footage, number of bedrooms, location scores, etc. This data is used to estimate property values and predict market trends.

- Transportation and Logistics: numerical data plays a part in route optimization as it represents delivery times, vehicle capacities, and fuel consumption. This type of data is then employed to optimize routes, reducing costs and improving delivery efficiency.

- Healthcare: patient diagnostics and treatment outcomes are often predicted based on numerical data, such as lab results and vital sign measurements.

Time-series data

Time-series data is a category of quantitative data that captures information as a sequence of points over a period of time. It is often collected at consistent intervals and then compared across periods (day-to-day, week-to-week, or month-to-month changes).

The main difference between numerical and time-series data is that a time series covers a period with clear starting and ending points and ties every value to a moment within it, so the order of the values matters, whereas plain numerical data is a group of numbers that aren't attached to specific time periods. This type of data proves invaluable in projecting market trends, for example when models forecast stock prices from historical data.
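As a small illustration of the day-to-day comparison mentioned above, the sketch below attaches a date to each value and computes the change from one observation to the next. The sales figures and the function name `day_over_day_change` are hypothetical.

```python
from datetime import date, timedelta

# Hypothetical daily sales, one observation per day starting on 1 January 2024.
start = date(2024, 1, 1)
daily_sales = [120, 135, 128, 150, 149]
series = [(start + timedelta(days=i), value) for i, value in enumerate(daily_sales)]


def day_over_day_change(series):
    """Return (date, change) pairs comparing each observation with the previous one."""
    changes = []
    for (_, previous), (day, current) in zip(series, series[1:]):
        changes.append((day, current - previous))
    return changes


changes = day_over_day_change(series)
```

The same values without their dates would still be numerical data, but the ordering that makes this comparison meaningful would be lost.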

Where is time-series data used?

- Finance: for portfolio management and stock price forecasting – time-series data is used in developing models that predict future stock movements based on historical trends. This helps investors make informed decisions.

- Retail: for inventory management and sales prediction – analyzing time-series data of past sales helps retailers forecast future demand. This method ensures optimal stock levels.

- Manufacturing: time-series data is employed for predictive maintenance – machinery sensor data is analyzed to predict equipment failures before they occur, reducing downtime and maintenance costs.

Qualitative data types: the world beyond numbers

In machine learning, qualitative data refers to non-numeric information that provides valuable insights into patterns, behaviors, and opinions that quantitative data cannot capture. This data type is crucial for tasks that require interpretation, such as sentiment analysis and content recommendation.

Categorical data

This data type is used to divide information into distinct groups or categories, which are fundamental for classification tasks in ML. Categorical data can include gender, nationality, types of cars, education levels, and more.
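Most models need categories turned into numbers before training. One common way to do that, shown here as a sketch rather than something the article prescribes, is one-hot encoding: each category becomes a vector with a single 1. The function name `one_hot_encode` and the car types are made up for the example.

```python
def one_hot_encode(values):
    """Map each category to a binary vector with a single 1 in its own position."""
    categories = sorted(set(values))  # stable, alphabetical column order
    index = {category: i for i, category in enumerate(categories)}

    encoded = []
    for value in values:
        vector = [0] * len(categories)
        vector[index[value]] = 1
        encoded.append(vector)
    return categories, encoded


car_types = ["sedan", "suv", "truck", "suv"]
categories, encoded = one_hot_encode(car_types)
```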

Where is categorical data used?

- E-commerce: this data type is used to classify products into various categories, enhancing search and recommendation systems.

- Marketing: understanding customer segments through categorical data allows for targeted advertising and personalized marketing campaigns.

Text data

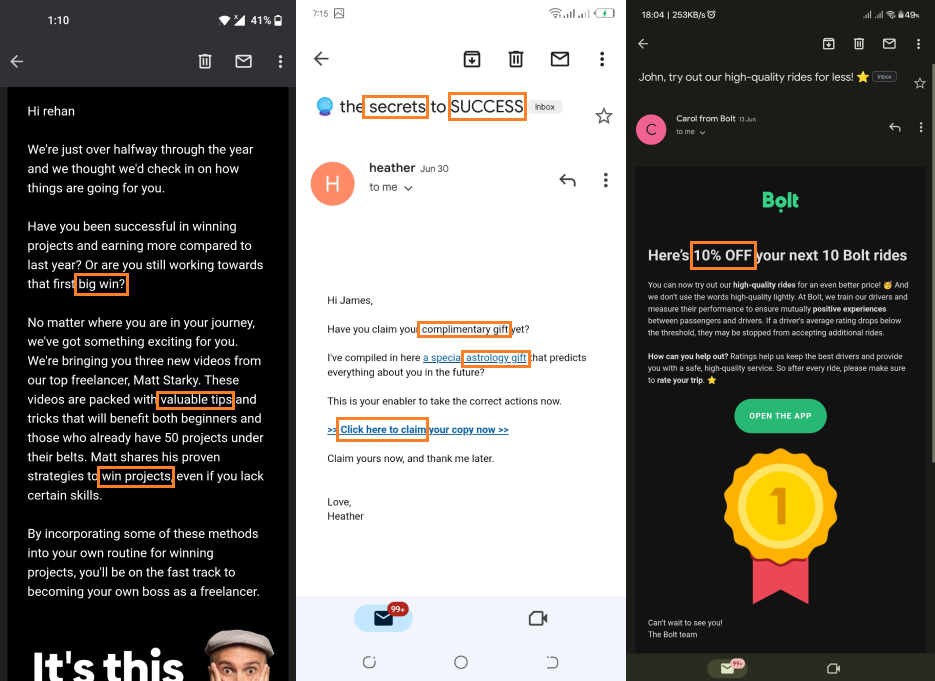

Text data covers a range of textual content, including social media posts and emails, as well as detailed reports and books. Natural Language Processing (NLP) methods are employed to examine and make sense of text data, allowing machines to comprehend human language.
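A very simple first step in many NLP pipelines is to turn raw text into word counts (a "bag of words"). The sketch below is one possible way to do that with the standard library; the tokenization rule and the sample sentence are assumptions for illustration, and real systems typically use far richer representations.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and keep runs of letters, digits, and apostrophes."""
    return re.findall(r"[a-z0-9']+", text.lower())


def bag_of_words(text):
    """Count how often each token appears in the text."""
    return Counter(tokenize(text))


counts = bag_of_words("Great product, great price. Not great support.")
```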

Where is text data used?

- Customer Service: chatbots and virtual assistants employ text data to provide automated support to customers.

- Social Media: sentiment analysis on user-generated content helps brands review public opinion and market trends.

Image data

Image data is very popular in computer vision tasks, where ML models are trained to analyze and interpret visual information from digital images.
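To a model, an image is a grid of numbers. The sketch below represents a tiny 2x2 RGB image as nested lists, converts it to grayscale with a plain channel average, and scales the values to the 0 to 1 range. The image contents and function names are illustrative assumptions; production code would normally use an imaging or array library.

```python
# A tiny 2x2 RGB image: each pixel is (red, green, blue) with values from 0 to 255.
image = [
    [(255, 0, 0), (0, 255, 0)],
    [(0, 0, 255), (255, 255, 255)],
]


def to_grayscale(image):
    """Average the three channels of every pixel (a simple, non-perceptual conversion)."""
    return [[sum(pixel) // 3 for pixel in row] for row in image]


def normalize(gray):
    """Scale 0-255 intensities to the 0.0-1.0 range often used as model input."""
    return [[value / 255 for value in row] for row in gray]


gray = to_grayscale(image)
normalized = normalize(gray)
```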

Where is image data used?

- Healthcare: diagnostic models analyze image data, such as X-rays and MRI scans, to identify and classify diseases.

- Autonomous vehicles: self-driving cars rely on real-time image data for navigation and spotting obstacles.

Audio data

Audio data encompasses a range of sound recordings – from human speech to environmental sounds. This data type is widely applied in speech recognition and sound analysis.
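Digitally, a sound recording is a sequence of amplitude samples taken at a fixed rate. The sketch below generates a synthetic tone and measures its loudness as the root mean square (RMS) of the samples. The sample rate, the 440 Hz tone, and the function names are assumptions chosen for illustration.

```python
import math

SAMPLE_RATE = 8000  # samples per second (assumed for this example)


def sine_wave(frequency_hz, duration_s, sample_rate=SAMPLE_RATE):
    """Generate amplitude samples in [-1, 1] for a pure tone."""
    n_samples = int(duration_s * sample_rate)
    return [
        math.sin(2 * math.pi * frequency_hz * i / sample_rate)
        for i in range(n_samples)
    ]


def rms(samples):
    """Root mean square of the samples, a basic measure of signal loudness."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


tone = sine_wave(440, 1.0)
loudness = rms(tone)
```

Speech recognition systems usually go further and convert such samples into frequency-based features, which is outside the scope of this sketch.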

Where is audio data used?

- Tech: voice-activated devices and speech recognition systems integrated into smartphones and home assistants rely on audio data to understand and execute user commands.

- Entertainment: music streaming services employ audio data to recommend songs or curate playlists based on user preferences.

Video data

Video data combines image data, as a sequence of frames, with audio data. It’s particularly useful for applications that require an understanding of movements, actions, or changes over time.
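One basic way to pick up changes over time is to compare consecutive frames. The sketch below treats each frame as a small grayscale grid and computes the mean absolute pixel difference between neighbors; a larger value suggests more movement. The frames and the function name `frame_difference` are invented for the example.

```python
def frame_difference(frame_a, frame_b):
    """Mean absolute pixel difference between two equally sized grayscale frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(frame_a, frame_b):
        for a, b in zip(row_a, row_b):
            total += abs(a - b)
            count += 1
    return total / count


# Three hypothetical 2x2 frames: nothing changes, then one pixel lights up.
frames = [
    [[0, 0], [0, 0]],
    [[0, 0], [0, 0]],
    [[0, 255], [0, 0]],
]

motion = [frame_difference(a, b) for a, b in zip(frames, frames[1:])]
```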

Where is video data used?

- Security: video surveillance systems use video data to monitor and identify suspicious activities and recognize threats.

- Sports analytics: performance analysis tools use video data to provide athletes with insights into their techniques and strategies.

Exploring types of data in machine learning reveals a landscape that offers numerous opportunities for algorithms to learn from the human world.

Quantitative data formats offer the accuracy needed for analysis, while qualitative data types provide depth and context, allowing machines to understand environmental factors. From the precision of numerical analysis to the depth of insight provided by text and image data, the applications of ML continue to expand, driven by the quality and variety of data we collect.

Advanced and emerging data types in ML

In the ever-changing realm of machine learning (ML), the introduction of advanced and emerging data types has ushered in new possibilities for exploration, innovation, and real-world implementations.

Sensor data

Sensor data comes from devices or tools that detect changes in their surroundings or within a system. It includes a variety of inputs: temperature, movement, humidity, pressure, or light. This type of data connects two worlds: the digital and the physical.

The Internet of Things (IoT) has significantly fueled the expansion of sensor data usage, as billions of interconnected devices generate large amounts of data.

This has sparked advancements in processing and analyzing data, allowing for real-time insights and actions. For example, in agriculture, sensors monitoring soil and weather conditions provide information to irrigation systems, which calculate water usage based on the needs of crops and current weather patterns.
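The irrigation example can be sketched as a simple rule that turns sensor readings into an action. Everything numeric here (the target moisture, the minutes of watering per percentage point, the rain threshold) is a hypothetical value chosen for illustration, as is the function name `irrigation_minutes`. A real system would more likely learn or calibrate these from data.

```python
def irrigation_minutes(soil_moisture_pct, rain_forecast_mm,
                       target_moisture_pct=35.0, minutes_per_pct=2.0,
                       rain_skip_mm=5.0):
    """Suggest a watering time from a soil-moisture reading and a rain forecast."""
    if rain_forecast_mm >= rain_skip_mm:
        return 0.0  # enough rain expected, so skip watering

    # Water in proportion to how far the soil is below the target moisture.
    deficit = max(0.0, target_moisture_pct - soil_moisture_pct)
    return deficit * minutes_per_pct


# Hypothetical (soil moisture %, forecast rain in mm) readings from three fields.
readings = [(25.0, 0.0), (25.0, 10.0), (40.0, 0.0)]
schedule = [irrigation_minutes(moisture, rain) for moisture, rain in readings]
```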

Where is sensor data used?

- Healthcare: wearable devices collect data like heart rate and blood oxygen levels, allowing for the monitoring of patients outside traditional medical settings. For instance, smartwatches that track heart rate variability (HRV) can help estimate stress levels, offering a non-invasive method for monitoring mental well-being.

- Smart Cities: sensor data plays a role in crafting smart city solutions by optimizing traffic patterns based on real-time vehicle and pedestrian data or monitoring environmental factors like air quality across various city areas.

Graph data

Graph data focuses on the relationships and connections between entities, represented as nodes and edges in a graph structure, often with properties attached to both. Graph databases store data in this form and use the graph structure for semantic queries.

Graph data is invaluable for understanding complex networks – from internet infrastructure and social interactions to neural pathways and financial transactions.
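A minimal way to work with nodes and edges in code is an adjacency map plus a breadth-first search. The sketch below builds an undirected graph from a list of made-up connections and finds how many hops separate two entities. The names in `edges` and both function names are hypothetical.

```python
from collections import deque

# Hypothetical undirected connections between entities in a network.
edges = [("alice", "bob"), ("bob", "carol"), ("carol", "dave"), ("erin", "frank")]


def build_adjacency(edges):
    """Turn a list of edges into a node -> set-of-neighbors mapping."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def shortest_path_length(graph, start, goal):
    """Breadth-first search: number of edges on the shortest path, or None."""
    if start not in graph or goal not in graph:
        return None
    visited = {start}
    queue = deque([(start, 0)])
    while queue:
        node, distance = queue.popleft()
        if node == goal:
            return distance
        for neighbor in graph[node]:
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, distance + 1))
    return None  # the two nodes are not connected


graph = build_adjacency(edges)
hops = shortest_path_length(graph, "alice", "dave")
```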

Where is graph data used?

- Pharmaceuticals: during the process of creating a new drug, specialists use graph data to comprehend the connections among genes, proteins, and other chemical compounds. For instance, models based on graph data have played a role in pinpointing existing drugs that can be repurposed to treat diseases by studying how they impact biological networks related to those diseases.

- Cybersecurity: in this field, graph data helps detect and analyze threats. It does so by mapping the relationships between different entities in a network. This approach can uncover unusual patterns that signify security breaches – e.g., unusual connections or data flows within a network.

Cutting-edge data types like sensor data and graphs are leading the way in enhancing the capabilities of machine learning models. By introducing layers of data analysis, they enable more nuanced examinations and applications across various fields. With technological advancements progressing, these types of data are expected to play pivotal roles in fostering innovation and addressing some of the most complex challenges across different sectors.

Things to consider when using data in ML

Data quality and preprocessing

Every machine learning project starts with data, and ensuring its quality is crucial. Good quality data leads to meaningful insights, which is why data cleaning and preprocessing are vital. These processes involve addressing missing values, removing duplicates, and correcting errors that could skew results and make predictions unreliable if left unattended. Techniques like binning, regression, and clustering can also improve data quality by smoothing out noisy values.
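The cleaning steps named above can be sketched on a toy dataset. The record layout (`id` and `age` fields), the choice to fill missing ages with the mean, and the ten-year bin width are all assumptions made for this example, not recommendations from the article.

```python
def clean_records(records):
    """Drop exact duplicates, then fill missing ages with the mean of known ages."""
    seen = set()
    unique = []
    for record in records:
        key = (record["id"], record["age"])
        if key not in seen:
            seen.add(key)
            unique.append(dict(record))  # copy so the input is left untouched

    known_ages = [r["age"] for r in unique if r["age"] is not None]
    if known_ages:
        mean_age = sum(known_ages) / len(known_ages)
        for r in unique:
            if r["age"] is None:
                r["age"] = mean_age
    return unique


def bin_age(age, width=10):
    """Binning: replace an exact age with the label of its range, e.g. '30-39'."""
    lower = int(age // width) * width
    return f"{lower}-{lower + width - 1}"


records = [
    {"id": 1, "age": 34},
    {"id": 2, "age": None},  # missing value
    {"id": 1, "age": 34},    # exact duplicate
    {"id": 3, "age": 46},
]
cleaned = clean_records(records)
age_bins = [bin_age(r["age"]) for r in cleaned]
```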

According to an IBM estimate, poor data quality costs the U.S. economy $3.1 trillion a year, so the economic impact of this issue is tremendous. Additionally, feature engineering, the process of converting data into a suitable format for modeling, plays a key role in improving the performance of models. It often requires expertise in a specific domain and creative problem-solving skills.

Data privacy and security

In a time where data breaches regularly make headlines, the importance of privacy and security can’t be overstated. The ethical implications of using sensitive information in machine learning projects call for protective measures.

Regulations such as GDPR in the EU and CCPA in California have established benchmarks for data privacy standards, prompting machine learning professionals to implement anonymization techniques and secure storage methods.

Moreover, differential privacy involves introducing “noise” into datasets or query results so that the removal or addition of a single individual’s data does not significantly affect the overall outcome. This way, differential privacy protects against personal data being inferred from the published data or analysis results.
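One standard way to add such noise is the Laplace mechanism, sketched below for a simple counting query. The function names, the sample ages, and the `epsilon` value are assumptions for illustration; the sketch is not a vetted privacy implementation and should not be used as one.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(values, predicate, epsilon, rng):
    """Return a noisy count of the values matching predicate (Laplace mechanism)."""
    true_count = sum(1 for value in values if predicate(value))
    sensitivity = 1.0  # one person joining or leaving changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(0)  # fixed seed only to make the sketch repeatable
ages = [23, 35, 41, 29, 52, 67, 38]  # hypothetical data
noisy_over_40 = private_count(ages, lambda age: age > 40, epsilon=0.5, rng=rng)
```

A smaller `epsilon` means more noise and stronger privacy, at the cost of less accurate answers. Averaged over many runs the noisy count stays close to the true count (3 in this toy data), while any single answer reveals little about one individual.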

Scalability and storage

The amount of data globally is expected to hit 180 zettabytes by 2025, with a big part of it dedicated to machine learning. This rapid expansion highlights the need for storage solutions that can grow with the demand.

Scalable storage solutions have seen a 50% year-over-year rise in adoption by businesses utilizing ML. Cloud platforms specifically have become key players in scalability, providing storage and computing capabilities that allow machine learning initiatives to expand operations without the need for significant initial investments in physical infrastructure.

As datasets expand in both size and complexity, scalability and storage become harder to manage. Cloud computing services offer storage solutions and computing resources that empower ML models. Also, technologies like data lakes and distributed file systems (such as Hadoop's HDFS) enable enterprises to store and manage petabytes of data efficiently.

Dataset resources for ML engineers

The quest for high-quality datasets has led ML engineers to search for various repositories to find the one that fits their needs. Here are some of the dataset resources used by machine learning professionals for different purposes.

Kaggle

Kaggle is a large, supportive AI and ML community with over 5 million data scientists sharing, stress testing, and staying up-to-date on all the latest ML techniques. Kaggle hosts more than 299,000 datasets and 2,300 pre-trained, ready-to-deploy ML models spanning various domains and fields, including healthcare and retail.

This platform has democratized access to data and powered innovation – the accuracy of the models trained on Kaggle’s datasets often surpasses industry benchmarks by 10-15%. It serves as a platform where data scientists can delve into data, analyze it, and exchange insights, fostering a community focused on learning and collaboration.

UCI Machine Learning Repository

The UCI Machine Learning Repository houses a collection of databases, domain theories, and data generators. They are widely used by the academic community for empirical analysis of ML algorithms. UCI was launched as an FTP archive in 1987 by David Aha, then a PhD student. Since then, it has been widely used by students, educators, and researchers all over the world as a primary source of machine learning datasets.

This repository organizes datasets based on the type of machine learning task they are best suited for (e.g., classification, regression), making it simpler for researchers to locate data for their studies.

Google Dataset Search

Functioning as a search engine for datasets, Google Dataset Search allows users to discover free data sources available across the internet. Whether you seek government statistics, scientific findings, or historical archives, Google Dataset Search can help you find them.

Google Dataset Search complements Google Scholar, the company’s search engine for academic studies and reports.

Dataset Search can filter results based on the desired type of data (for example, focusing on images or text).

AWS Public Datasets

Amazon Web Services offers a program that shares public datasets for anyone to use. These datasets are optimized for AWS storage and processing capabilities, which streamlines large-scale data analysis tasks by minimizing time and technical obstacles.

These datasets are compiled into Amazon’s Registry of Open Data on AWS. Looking up datasets is easy with an intuitive search function, dataset descriptions, and usage examples.